
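In recent years, a wave of intelligent technology has swept the world, and AI built on deep learning has achieved major breakthroughs. In tasks such as robotics [1], speech recognition [2-3], image recognition [4-7], and natural language processing [8-9], the recognition and decision-making performance of AI now matches or even exceeds that of humans. For example, AlphaGo defeated human professional Go champions, and driverless cars developed by companies such as Google and Baidu have begun testing on public roads.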

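Professor Nilsson of Stanford University defined artificial intelligence as "the discipline of knowledge: the science of how to represent knowledge, how to acquire it, and how to use it" [10]. Professor Winston of the Massachusetts Institute of Technology held that "artificial intelligence is the study of how to make computers do intelligent work that in the past only humans could do". These statements [10-11], along with many other interpretations of artificial intelligence [12-14], reflect the basic ideas and content of the discipline: by studying the laws of human intelligent activity, it constructs artificial systems with a degree of intelligence, and it covers the basic theories, methods, and techniques for using computer software and hardware to simulate and replace certain intelligent human behaviors (such as learning, reasoning, thinking, planning, and control).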

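The development of AI has received wide attention, and AI has been widely applied in robotics and control systems, giving traditional manufacturing unprecedented opportunities. The automotive industry, one of the leaders of traditional manufacturing, has likewise begun to transform conventional vehicles into intelligent ones by building on its own strengths and adopting intelligent technologies. An intelligent vehicle uses the car as a platform and applies a range of advanced information and intelligence technologies (sensor-based perception, V2X connected communication, driving decision-making, and so on). It represents both an important direction for the industrialization of automotive technology and the mainstream trend of automotive innovation. China's Ministry of Industry and Information Technology defines an intelligent vehicle as a new-generation vehicle that carries advanced on-board sensors, controllers, actuators, and other devices, integrates modern communication and network technologies, exchanges and shares intelligent information between the vehicle and other vehicles, people, roads, and the cloud, provides complex environment perception, intelligent decision-making, and cooperative control, achieves safe, efficient, comfortable, and energy-saving driving, and can ultimately operate in place of a human driver.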

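The intelligent technology in an intelligent vehicle can be divided into three layers: environment perception, decision and planning, and motion control. The environment perception layer uses environment sensors (cameras, lidar, millimeter-wave radar, ultrasonic radar, odometers, GPS, and so on) to sense the driving environment, and uses vehicle-state sensors (such as wheel-speed sensors) to sense the state of the vehicle itself. After processing by intelligent models, it produces a description of the vehicle's surroundings (absolute position, lane lines, relative positions of surrounding vehicles, pedestrian positions, types and positions of dynamic and static obstacles, behavior predictions, and so on). The decision and planning layer uses driving decision algorithms to interpret the independent, complementary, and redundant information distributed over space and time; based on the surroundings perceived in real time, it decides which driving commands the vehicle can execute and plans a trajectory. The motion control layer receives the driving commands from the decision and planning layer and keeps the vehicle running stably while maintaining control accuracy.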
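The three-layer structure described above can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration: the `Obstacle` and `Command` types, the follow-the-nearest-obstacle rule, the proportional controller, and all parameter names are hypothetical and are not taken from any system surveyed here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Obstacle:
    distance_m: float  # longitudinal gap to the obstacle ahead
    speed_mps: float   # obstacle speed along the lane


@dataclass
class Command:
    target_speed_mps: float


def perceive(ranges_m: List[float], speeds_mps: List[float]) -> List[Obstacle]:
    """Perception layer: turn (hypothetical) raw readings into obstacle objects."""
    return [Obstacle(d, v) for d, v in zip(ranges_m, speeds_mps)]


def decide(obstacles: List[Obstacle], cruise_mps: float, safe_gap_m: float) -> Command:
    """Decision layer: cruise, or match the nearest obstacle inside the safe gap."""
    close = [o for o in obstacles if o.distance_m < safe_gap_m]
    if not close:
        return Command(cruise_mps)
    nearest = min(close, key=lambda o: o.distance_m)
    return Command(min(cruise_mps, nearest.speed_mps))


def control(current_mps: float, cmd: Command, gain: float = 0.5) -> float:
    """Control layer: proportional controller returning an acceleration request."""
    return gain * (cmd.target_speed_mps - current_mps)


obstacles = perceive([15.0, 80.0], [8.0, 20.0])
cmd = decide(obstacles, cruise_mps=25.0, safe_gap_m=30.0)
accel = control(current_mps=10.0, cmd=cmd)
```

The point of the sketch is only the data flow: each layer consumes the output of the previous one, so an error or ambiguity introduced in perception propagates into the decision and then into the control request.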

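The uncertainty that arises from randomness and fuzziness is the most basic characteristic of human thinking. AI, which simulates and studies human thinking, is therefore also uncertain. As science and technology advance, researchers must handle more and more variables, the relationships among those variables grow more complex, and the demands on the precision of system judgment and inference grow higher. Practice shows, however, that complex systems are often hard to describe precisely. This creates a conflict between the demand for precision and the complexity of the problem itself: the higher the complexity, the lower the capacity for meaningful precision. Complexity implies many factors, so when solving such problems people can only capture the main part and ignore the secondary parts, which often blurs concepts that were originally clear and thereby introduces uncertainty.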

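Safety problems caused by subjective human factors are usually addressed by government bodies, which improve laws and regulations to guide and manage the healthy development of AI. This paper focuses instead on safety problems caused by objective technical issues. The safety of intelligent vehicles is receiving growing public attention. The New Generation Artificial Intelligence Development Plan issued by the State Council in 2017 states explicitly: "While vigorously developing artificial intelligence, we must attach great importance to the safety risks and challenges it may bring, strengthen forward-looking prevention and constraint-based guidance, minimize risk, and ensure the safe, reliable, and controllable development of artificial intelligence" [15]. For these reasons, research on the safety of the intended functionality (SOTIF) has emerged. SOTIF was first defined in ISO/PAS 21448 [16] and concerns hazards and risks caused by functional insufficiencies or by reasonably foreseeable misuse by persons. One example is whether an intelligent vehicle can still drive as intended in heavy rain or snow, when the sensors themselves have not failed.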

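This paper reviews four aspects of intelligent vehicle research: environment perception algorithms, intelligent decision-making algorithms, the uncertainty of intelligent algorithms, and the safety problems that this uncertainty causes, in the hope of drawing the attention of researchers in the field and offering guidance.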


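Environment perception is the foundation of planning, decision-making, and control execution in intelligent vehicles. It is one of the key technologies of intelligent vehicle research and a current research hotspot. This section first reviews environment perception algorithms for intelligent vehicles and then summarizes current research on the decision and planning layer.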


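1) Object detection algorithms

Object detection aims to find the objects of interest in an image or video and to determine their positions and sizes. It is one of the core problems of machine vision and has been studied for nearly twenty years. As a fundamental problem, it underlies many other computer vision tasks, and object detection algorithms are now widely used in real-world applications such as intelligent driving, robot vision, and video surveillance. Since 2012, advances in big data and hardware computing power have brought convolutional neural networks (CNN) back to researchers' attention. Compared with traditional hand-crafted features [17-21], features extracted by a CNN are more robust and capture deeper structure, which led researchers to apply CNNs to object detection. Deep-learning object detectors fall into two groups [22]: two-stage and one-stage methods. Two-stage methods follow a coarse-to-fine detection strategy, whereas one-stage methods use a neural network to complete detection in a single step.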

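R-CNN [23] was the first network to apply deep learning to object detection, and its pipeline shows the original two-stage idea. Candidate boxes are first extracted by a region proposal step; the image inside each candidate box is then rescaled to a common size and fed into a CNN to extract features; finally, a classifier such as an SVM [24] classifies the features of each region to complete detection and recognition. R-CNN raised the mAP (mean average precision) on the VOC07 dataset from 33.7% to 58.5%. Fast R-CNN [25] removed much of the redundant computation in R-CNN and regresses bounding boxes while training the detector, raising mAP further from 58.5% to 70% with a detection speed nearly 200 times that of R-CNN. Fast R-CNN also streamlined two-stage training by replacing the SVM classifier with a softmax classifier, so that classification and network training are unified.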
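The mAP figures quoted above rest on a matching criterion: a detection counts as correct only if its box overlaps a ground-truth box sufficiently, and the PASCAL VOC benchmark uses an intersection over union (IoU) above 0.5 for this. The following minimal sketch computes IoU for two axis-aligned boxes; the `(x1, y1, x2, y2)` corner convention is an assumption chosen for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


# Two 2x2 boxes sharing a 1x1 corner: IoU = 1 / (4 + 4 - 1) = 1/7.
overlap = iou((0, 0, 2, 2), (1, 1, 3, 3))
is_true_positive = overlap > 0.5  # False for this pair
```

Average precision for one class is then obtained from the precision-recall curve of detections ranked by confidence, and mAP is the mean over classes; those steps are omitted here.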

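One-stage methods detect objects more directly and usually use an end-to-end network. The representative work is YOLO (you only look once) [26], the first one-stage detector based on deep learning. YOLO is very fast, but its localization accuracy is lower than that of two-stage methods, especially for small objects. Its authors later proposed YOLO_v2 [27] and YOLO_v3 [28], which improve detection accuracy and pay more attention to localization accuracy. SSD (single shot multibox detector) [29] introduced multi-reference and multi-resolution detection techniques and markedly improved detection accuracy for small objects.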

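2) Lane detection algorithms

Vision-based lane detection is already widely used in driver assistance systems. A familiar example is the lane-keeping function in private cars: if the vehicle stays on a lane line for too long, the system prompts the driver to adjust the vehicle's position.

In recent years, deep-learning lane detection algorithms have been introduced into intelligent driving and have shown strong detection performance. Reference [30] trained an end-to-end lane detection model with an 8-layer convolutional neural network. As object detection networks such as YOLO [26] became more accurate and efficient, researchers gradually brought them into lane detection systems. Reference [31] built a CNN model that performs lane detection in a single forward pass and regresses the two end points of each lane line and their depth. These methods, however, perform poorly when lane lines occupy only a small part of the image or are occluded. Researchers therefore drew on results from traditional lane detection concerning vanishing points, lane shape, and trajectories [32] and combined them with neural networks, which reduces missed and false detections to some extent. Reference [33] models each lane line with 15 points to avoid inaccurate detection of lane boundaries. Inspired by how humans perceive the lane environment, reference [34] designed a multi-task network that detects the vanishing point of the lane lines and thereby improves detection accuracy. Reference [35] designed a network that convolves slice by slice within the feature maps, adding constraints between pixels to the CNN framework so that it learns the structural information of lane lines.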

3) Object tracking algorithms

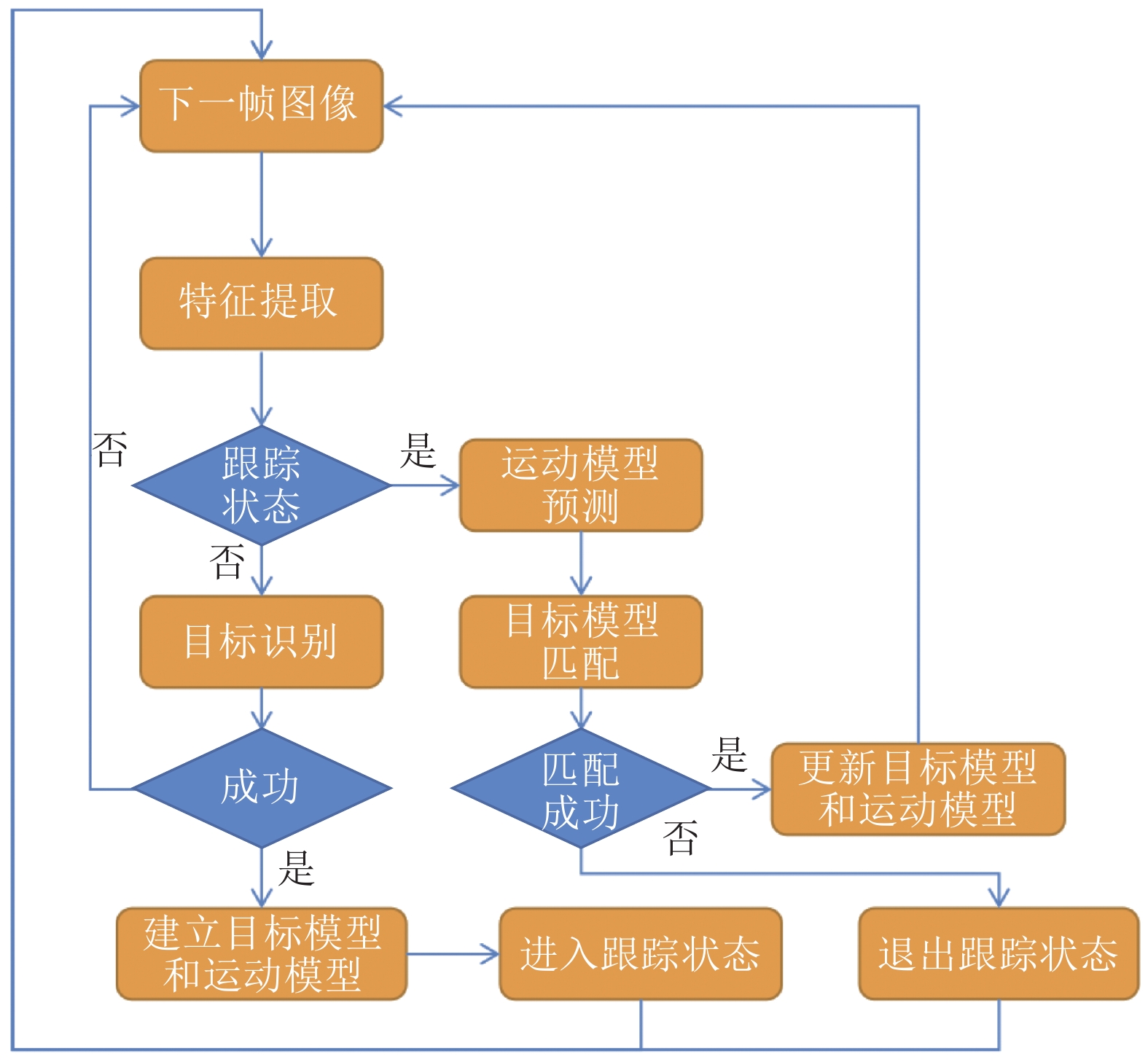

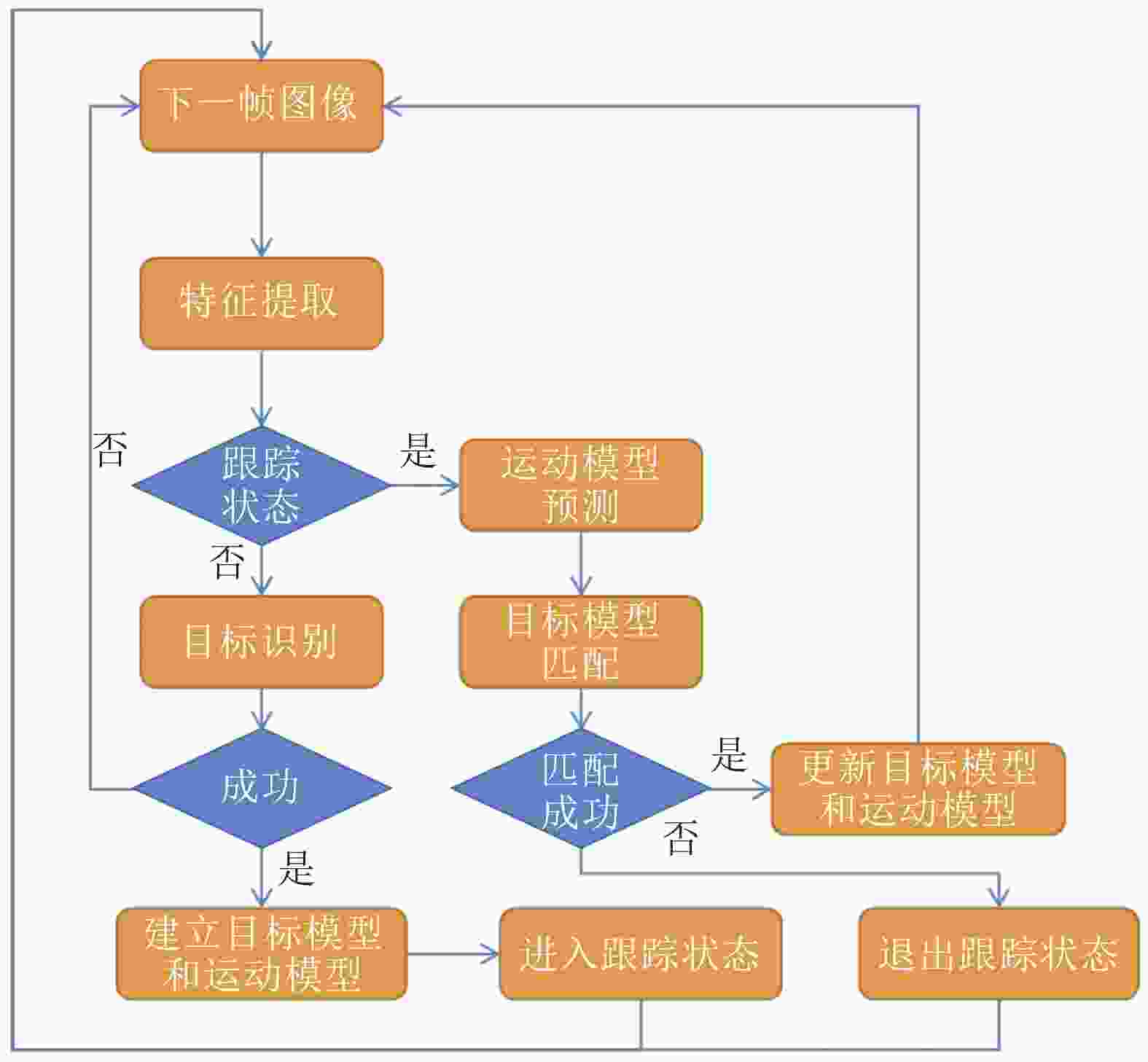

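Visual tracking estimates the position or region of an object of interest across a sequence of consecutive video frames and, combined with its motion history, predicts its future motion. It is essential for an intelligent vehicle to analyze and understand its surroundings. Figure 1 shows the basic block diagram of online visual tracking.

In recent years, neural networks have achieved great success in object tracking thanks to their strong feature modeling ability. Correlation-filter-based tracking has received the most attention. Its core idea is to match the template of the tracked object against the image inside the search (candidate) region; the position with the highest matching score is taken as the tracked image patch. Features extracted by deep neural networks are more robust than traditional features, but because of network complexity they often cannot be extracted in real time, so combining deep networks with filtering has practical research value. Reference [36] designed a multi-layer convolutional network for visual tracking. It argues that deep-layer features describe semantics well but carry weak spatial information, a weakness that shallow-layer features make up for. Its main idea is to train a separate filter on the features of each layer; when locating the target, the filter responses over the search region are weighted and summed into a response map, and the region of maximum response is taken as the target position. In a similar multi-layer approach, reference [37] proposed the hedged deep tracking algorithm, which uses features from 6 network layers instead of the 3 in reference [36] and replaces the fixed weights with adaptively learned ones.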
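The weighted fusion of per-layer filter responses described above can be sketched as follows. This is an illustration only, not the implementation of reference [36] or [37]: the response maps are tiny hand-written grids rather than outputs of trained correlation filters, and the function name `fuse_and_locate` and the weight values are hypothetical.

```python
def fuse_and_locate(responses, weights):
    """Weighted sum of per-layer response maps, then the (row, col) of the peak.

    responses: list of 2-D lists, one map per network layer, all the same shape.
    weights:   one non-negative weight per layer.
    """
    rows, cols = len(responses[0]), len(responses[0][0])
    fused = [
        [sum(w * r[i][j] for w, r in zip(weights, responses)) for j in range(cols)]
        for i in range(rows)
    ]
    # The fused peak is taken as the estimated target position.
    peak = max(
        ((i, j) for i in range(rows) for j in range(cols)),
        key=lambda p: fused[p[0]][p[1]],
    )
    return peak, fused


deep_layer = [[0.1, 0.2], [0.9, 0.3]]     # semantically strong, spatially coarse
shallow_layer = [[0.0, 0.8], [0.5, 0.1]]  # spatially precise, semantically weak

peak_fixed, _ = fuse_and_locate([deep_layer, shallow_layer], [1.0, 0.5])
peak_shifted, _ = fuse_and_locate([deep_layer, shallow_layer], [0.2, 1.0])
```

With the first set of weights the deep layer dominates and the peak is at `(1, 0)`; with the second the shallow layer dominates and the peak moves to `(0, 1)`. This sensitivity to the weights is the motivation for learning them adaptively instead of fixing them.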

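Besides feature extraction and filter computation, a neural network can also serve as an auxiliary network that extracts salient regions of the image. Reference [38] proposed an enhanced object tracking algorithm based on recurrent neural networks (RNN): an RNN extracts a saliency map, which is fused with the filter coefficients to strengthen the filter's suppression of background clutter. Other work combines the attention mechanism with filter networks. The attentional correlation filter network (ACFN) uses attention to select image features and filters and to judge which parts of a region are reliable, which then guides target localization in the next frame [39].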

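4) Behavior prediction algorithms

Behavior prediction uses current and past perception results to predict the future trajectories of other moving objects around the intelligent vehicle (other vehicles, pedestrians, non-motorized vehicles, and so on). To drive safely and efficiently, an intelligent vehicle must not only perceive the state of the moving objects around it but also actively predict their future trajectories, which helps it make the best decision in advance. Recent progress in machine learning, and deep learning in particular, provides powerful tools for this task. Behavior prediction algorithms can generally be divided into three groups: solutions based on recurrent neural networks, solutions based on convolutional neural networks (CNN), and other solutions. Reference [40] uses one group of LSTMs to model the trajectories of individual vehicles and another group to model their interactions. References [41-43] use multi-layer long short-term memory (LSTM) networks as sequence classifiers. Reference [44] proposed a two-layer LSTM network for estimating acceleration. Reference [45] proposed multiple RNNs: one group of LSTM networks models the trajectories of individual vehicles, and another group models the interactions between the intelligent vehicle and other objects. Among CNN solutions, reference [46] proposed a 6-layer network of convolutional and fully connected layers that predicts the intentions of surrounding vehicles. References [47-48] use MobileNet V2 [49] as the feature extractor and predict the intentions of surrounding vehicles from the extracted features. Reference [50] first uses two CNN backbones to extract features from lidar data and rasterized maps and then uses three separate networks to produce detection, intention, and trajectory outputs. Some researchers combine RNNs and CNNs for behavior prediction. Reference [51] extracts spatial image features with a CNN, feeds them into an LSTM, and finally passes the result to a deconvolution network that outputs a prediction map of the same size as the original input.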

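5) Navigation and localization

Beyond the perception information discussed above, an intelligent vehicle that is to perform global path planning must also know its own position in the global environment accurately through a localization system. Simultaneous localization and mapping (SLAM) uses cameras, inertial measurement units (IMU), and other sensors to build a global map while the vehicle drives and, at the same time, to localize the vehicle within that map. Historically, SLAM research has followed two lines: frameworks based on the extended Kalman filter [52-53] and frameworks based on graph optimization [54-58].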

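The extended Kalman filter generalizes the Kalman filter to nonlinear systems. EKF-based SLAM frameworks are relatively simple and adapt easily to multi-sensor fusion, so they generally run fast. However, because the camera parameters are estimated by filtering, these frameworks cannot produce accurate, smooth camera poses. Graph-optimization-based SLAM frameworks avoid this problem by drawing on graph optimization theory: the front end tracks the camera and performs localization, while the back end builds and optimizes the map. The back end usually optimizes the camera poses and the map to a local or global optimum, so the camera trajectory tracked by graph-optimization-based SLAM is smoother and the map more detailed. PTAM [54] was the first graph-optimization SLAM framework to run close to real time. Its basic idea is that the front end tracks the camera pose with a tracking algorithm and, when the condition for creating a key frame is met, triggers the mapping task in the back end. ORB-SLAM [55] bases its front-end tracking on the ORB descriptor [20] and released open-source code that can be adapted for commercial use [59]; because ORB-SLAM relies on sparse feature points, the map it builds is also sparse. SVO [57] and DSO [56] offer solutions for semi-dense and dense maps. To cope with complex environments, multi-sensor fusion is also common in SLAM: combining a camera with an IMU addresses the depth estimation errors to which purely visual SLAM is prone. VINS [58] successfully fuses vision and IMU data and can build large-scale maps outdoors over long periods.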
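To make the filtering idea concrete, the following sketch shows one predict/update cycle of an extended Kalman filter on a scalar state. It is a toy under stated assumptions, not an EKF-SLAM implementation: a real SLAM state stacks the camera pose and landmark positions, whereas here the state is a single position, the motion model is `x + u`, and the measurement model `h(x) = x**2` is a hypothetical nonlinear sensor chosen only so that linearization is needed.

```python
def ekf_step(x, p, u, z, q, r):
    """One EKF cycle for a scalar state.

    x, p: prior state estimate and its variance
    u:    control input (displacement), motion model x' = x + u
    z:    measurement, assumed model z = x**2 + noise
    q, r: process and measurement noise variances
    """
    # Predict: propagate the estimate and inflate its variance.
    x_pred = x + u
    p_pred = p + q

    # Linearize the measurement model around the prediction: dh/dx = 2x.
    h_jac = 2.0 * x_pred
    innovation = z - x_pred ** 2

    # Update: Kalman gain from the linearized model.
    s = h_jac * p_pred * h_jac + r
    k = p_pred * h_jac / s
    x_new = x_pred + k * innovation
    p_new = (1.0 - k * h_jac) * p_pred
    return x_new, p_new


x_est, p_est = ekf_step(x=1.0, p=1.0, u=1.0, z=4.41, q=0.1, r=0.5)
```

In this call the prediction is 2.0 and the measurement corresponds to a true position of 2.1, so the update moves the estimate toward 2.1 and shrinks the variance. Because each step keeps only the latest estimate and a linearized model, linearization errors accumulate, which is consistent with the limitation on pose accuracy noted above.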

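Loop closure detection determines whether the intelligent vehicle has previously visited its current location. While the vehicle reconstructs its surroundings in 3D, accumulated numerical error causes scale drift, a problem SLAM has not yet fully solved. Loop closure detection reduces the effect of accumulated error on the whole 3D reconstruction and improves convergence. In the era of traditional machine learning, many methods implemented loop closure detection with the bag-of-words (BoW) model [60]. In recent years, deep-learning methods have attracted much attention; they treat loop closure detection as an image retrieval problem. Reference [61] was the first to build a neural network model from the perspective of visual place recognition. Inspired by BoW and neural networks, NetVLAD [62] uses a network to learn the residuals with respect to cluster centers and greatly improves retrieval performance. Other studies of visual place recognition include references [63-66].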
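A bag-of-words loop closure check can be sketched in a few lines. The sketch assumes that each image has already been converted to a list of visual-word IDs (in practice by quantizing local descriptors against a trained vocabulary, a step omitted here); the word IDs, the cosine-similarity score, and the 0.8 threshold are hypothetical choices for illustration.

```python
import math
from collections import Counter


def bow_vector(word_ids):
    """Histogram of visual-word occurrences for one image."""
    return Counter(word_ids)


def cosine_similarity(a, b):
    """Cosine similarity of two sparse word histograms."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def detect_loop(current, keyframes, threshold=0.8):
    """Index of the most similar stored keyframe at or above threshold, else None."""
    best_idx, best_score = None, threshold
    for idx, keyframe in enumerate(keyframes):
        score = cosine_similarity(current, keyframe)
        if score >= best_score:
            best_idx, best_score = idx, score
    return best_idx


keyframes = [bow_vector([1, 1, 2, 3]), bow_vector([7, 8, 9])]
revisit = detect_loop(bow_vector([1, 2, 3, 1]), keyframes)  # same words as keyframe 0
new_place = detect_loop(bow_vector([4, 5]), keyframes)      # no shared words
```

A match returned by such a check would normally be verified geometrically before it is added as a constraint, since visually similar but distinct places can produce similar histograms.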


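Decision algorithms aim to bring high-level cognitive ability into the driving decision process so that the vehicle can handle complex driving environments. Research on them dates back to the 1980s. Decision algorithms for intelligent vehicles fall into three categories: methods based on behavioral cognition, methods based on data mining, and methods based on machine learning.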

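Decision methods based on behavioral cognition imitate and formalize the human ability to recognize and abstract, producing decision rules that an intelligent vehicle can identify and execute. The approach is to build a library of driving decision rules from traffic laws and regulations and from human driving experience, and then to determine the vehicle's driving behavior in each driving environment according to the logic in the rule library [67]. This approach completes decision tasks well on closed roads, but the driving environment an intelligent vehicle faces is open and often unpredictable, so its use in practice is limited. Princeton University designed an intelligent decision algorithm based on perceptual matching: using deep learning, it matches the input image to key perception indicators and then decides the driving behavior with a vehicle dynamics model. Carnegie Mellon University adopted behavioral reasoning when designing its decision algorithm [68]. References [69-70] analyze driver data with Bayesian methods so that the vehicle can predict the intentions of other vehicles when traffic merges because the number of lanes decreases.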

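Decision methods based on data mining use big-data techniques to clean and analyze massive data sets, extract features, and mine the valuable content behind the data (such as driving experience and judgment knowledge), and then formalize that content into decision commands that an intelligent vehicle can execute. These methods depend heavily on data scale, and insufficient data is often the bottleneck for decision performance. Reference [71] built a hidden Markov model for predicting decision behavior during lane changes.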
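How a hidden Markov model turns a stream of observations into a belief about a hidden driving intention can be shown with the standard forward (filtering) recursion. The sketch below is not the model of reference [71]: the two hidden states, the discretized lateral-drift observations, and every probability are hypothetical values picked for illustration, whereas in a data-mining approach they would be estimated from recorded driving data.

```python
def forward_filter(observations, prior, transition, emission):
    """Posterior over hidden states after each observation has been absorbed.

    prior:      belief over states one step before the first observation
    transition: transition[s_prev][s_next] = P(s_next | s_prev)
    emission:   emission[s][obs] = P(obs | s)
    """
    belief = dict(prior)
    for obs in observations:
        # Predict: push the belief through the transition model.
        predicted = {
            s: sum(belief[prev] * transition[prev][s] for prev in belief)
            for s in belief
        }
        # Correct: weight by the observation likelihood and renormalize.
        unnormalized = {s: predicted[s] * emission[s][obs] for s in predicted}
        total = sum(unnormalized.values())
        belief = {s: v / total for s, v in unnormalized.items()}
    return belief


prior = {"keep": 0.9, "change": 0.1}
transition = {
    "keep": {"keep": 0.9, "change": 0.1},
    "change": {"keep": 0.2, "change": 0.8},
}
emission = {
    "keep": {"small_drift": 0.9, "large_drift": 0.1},
    "change": {"small_drift": 0.3, "large_drift": 0.7},
}

belief_drifting = forward_filter(["large_drift", "large_drift"], prior, transition, emission)
belief_steady = forward_filter(["small_drift", "small_drift"], prior, transition, emission)
```

After two large-drift observations the belief in a lane change rises well above one half, while two small-drift observations keep it low. The quality of such predictions depends directly on how well the probabilities were estimated, which is why data scale is the bottleneck noted above.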

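Decision methods based on machine learning are the current research hotspot. They use AI to imitate humans starting from the source of human thinking. Relying on powerful hardware and the self-learning ability of neural networks, and through extensive training in real or simulated driving environments, they let an intelligent vehicle learn correct driving decisions on its own. Beijing Institute of Technology built a lane-change rule database: the data were first labeled manually, and a neural network then learned the lane-change rules from the database. The result was validated on a BYD autonomous driving platform, where the similarity to the decisions of human drivers reached 80.4% [72]. Industry has also done much work on intelligent decision-making. Companies such as Google, Baidu, Xpeng, and Drive.ai use AI to solve the decision problem, generally in an end-to-end manner: starting from environment perception, a deep learning model decides the behavior of the intelligent vehicle directly, with the aim of replacing the human brain with a "driving brain".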


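Hawking pointed out that uncertainty is a basic feature of the universe we live in [73]. As research on intelligent technology deepens, the non-formalizable, image-based thinking of human intelligence, which is the object that intelligent technology studies, has become a bottleneck. Although current computer hardware and software offer powerful computing, storage, and search capabilities, they struggle with non-formalized problems. AI research usually simulates the real world by considering only deterministic factors, capturing the main part and ignoring the secondary parts. Taking the uncertain factors of the real world into account is therefore an indispensable step in simulating and extending human intelligence.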

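Artificial intelligence has a history of only a little more than 60 years since the term was formally proposed at the Dartmouth Conference in 1956 [74]. Researchers often study AI together with philosophy, brain science, computer technology, psychology, and other disciplines, so the uncertainty of AI algorithms shows up in many ways. Examples include reasoning about the cognitive uncertainty of AI algorithms from a philosophical perspective [75] and reasoning about the uncertainty of the internal generative mechanisms of AI from the perspective of brain science [76]. Because space is limited, this paper focuses on the computer technology perspective, that is, on the uncertainty that arises in AI technology research.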

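1) Uncertainty in information acquisition

Humans acquire information through several senses (sight, hearing, smell, touch, and so on), yet still cannot grasp all of it. Shannon's information theory states that information is what eliminates uncertainty [77]. Uncertainty, however, already arises when a human subject filters information. In an intelligent vehicle, information is mainly acquired by sensors that imitate the human senses: cameras imitate the eyes, while microphones and loudspeakers imitate the ears and mouth. The gap between the design of these sensors and the human sense organs further increases the uncertainty of information acquisition.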
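The statement that information eliminates uncertainty can be made quantitative with Shannon entropy, H(p) = -Σ p_i log2 p_i, measured in bits. The short sketch below compares the entropy of a belief before and after a sensor reading; the four object classes and the posterior probabilities are hypothetical numbers used only for illustration.

```python
import math


def shannon_entropy(probs):
    """Entropy in bits of a discrete distribution; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Before sensing: four object classes are equally likely (maximum uncertainty).
prior = [0.25, 0.25, 0.25, 0.25]
# After a (hypothetical) camera reading: one class is favored but not certain.
posterior = [0.7, 0.1, 0.1, 0.1]

h_prior = shannon_entropy(prior)          # 2 bits
h_posterior = shannon_entropy(posterior)  # about 1.36 bits
information_gained = h_prior - h_posterior
```

The reading removes part of the uncertainty but not all of it. The residual entropy is one way to express the point made above: an imperfect sensor leaves the system uncertain even when it is working as designed.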

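2) Uncertainty in information understanding

Information understanding is the processing and interpretation of information by the human brain. Although uncertainty has already been introduced during information acquisition, the acquired information is still rich enough for AI algorithms. Because of the conflict between the size of the raw features and the available storage and computing capacity, researchers usually process the information and extract the features that best represent the original. In image recognition, where intelligent algorithms have been very successful, the raw image is usually not used directly as the feature for comparison; it is first processed. Principal component analysis (PCA) is another common information processing technique [78]. These routine techniques also introduce uncertainty.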

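3) Uncertainty in agent decision-making

In moving from information acquisition and understanding to intelligent decision-making, the agent usually chooses the best of several options. Formally this is a probabilistic problem and is therefore inevitably accompanied by uncertainty. Generating an intelligent strategy requires thorough analysis of the problem and a full understanding of environmental information, and the same agent may make very different decisions at different times and in different environments.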
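One common way to formalize choosing the best of several options under uncertainty is to minimize expected cost. The sketch below is an assumption-laden illustration, not a method taken from the surveyed literature: the maneuver names, outcome probabilities, and cost values are all hypothetical.

```python
def expected_cost(outcomes):
    """Expected cost of one option given (probability, cost) pairs."""
    return sum(prob * cost for prob, cost in outcomes)


def choose_action(options):
    """Option with the lowest expected cost."""
    return min(options, key=lambda name: expected_cost(options[name]))


# Environment A: swerving has a substantial chance of a costly outcome.
dense_traffic = {
    "brake": [(0.95, 1.0), (0.05, 20.0)],
    "swerve": [(0.80, 0.5), (0.20, 50.0)],
}
# Environment B: same agent and costs, but the adjacent lane is almost surely free.
open_road = {
    "brake": [(0.95, 1.0), (0.05, 20.0)],
    "swerve": [(0.99, 0.5), (0.01, 50.0)],
}

choice_a = choose_action(dense_traffic)  # "brake"  (1.95 vs 10.4)
choice_b = choose_action(open_road)      # "swerve" (1.95 vs 0.995)
```

The decision rule is identical in both cases; only the estimated probabilities differ. Any error in those estimates, inherited from perception and understanding, therefore carries straight through to the chosen action.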


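As advanced driver assistance systems (ADAS) grow more complex, the sophisticated sensors they add (such as lidar and ultrasonic radar) compensate to some extent for the uncertainty of information acquisition but make information understanding harder, so that in some situations the intended functionality of the intelligent vehicle cannot be achieved. In March 2018, an Uber self-driving car struck and killed a pedestrian in the United States. All sensing systems were working normally, but the pedestrian was recognized as an unknown object, which led to the tragedy. It is against this background that the safety of the intended functionality emerged.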


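The definition of SOTIF was first proposed in 2015. The ISO/PAS 21448 standard defines it in terms of the hazards and risks caused by functional insufficiencies or by reasonably foreseeable misuse by persons [16]. An example is whether a sensing system that has not failed can still perform its intended function in heavy rain or snow.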

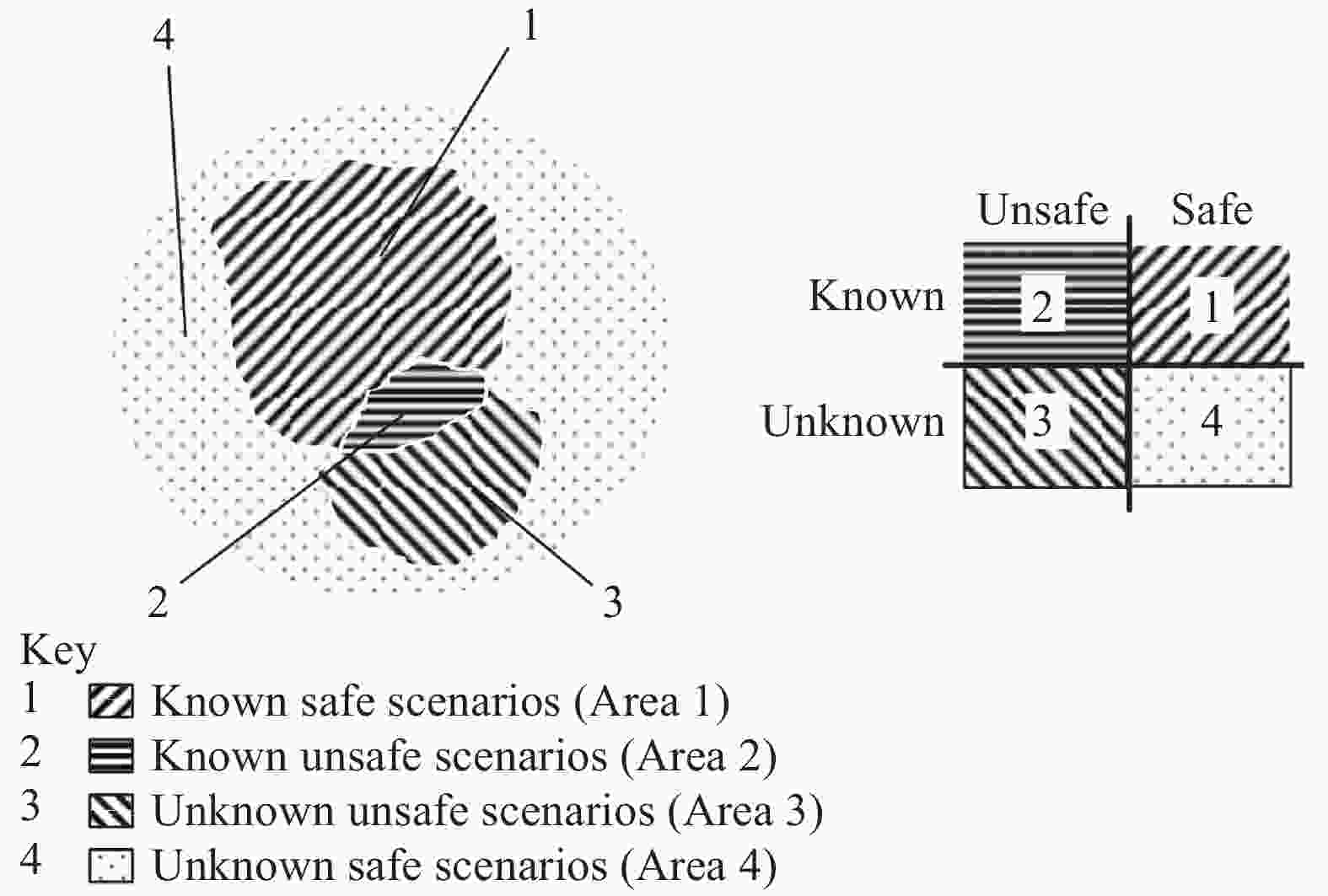

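SOTIF analysis is scenario-based. The ISO/PAS 21448 standard divides scenarios into the four areas shown in Figure 2: 1) known safe scenarios; 2) known hazardous scenarios; 3) unknown hazardous scenarios; 4) unknown safe scenarios. The goal of SOTIF research is to shrink the known hazardous and unknown hazardous areas to an acceptable size, that is, to keep scenarios within the safe areas as far as possible.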
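The four-area partition can be written down as a small data structure. This is a bookkeeping sketch only: the `Area` enum, the `(known, hazardous)` record format, and the `hazardous_share` metric are hypothetical names introduced here, and in practice a scenario can be labeled "unknown" only in hindsight, once testing or field data has revealed it.

```python
from enum import Enum


class Area(Enum):
    KNOWN_SAFE = 1
    KNOWN_HAZARDOUS = 2
    UNKNOWN_HAZARDOUS = 3
    UNKNOWN_SAFE = 4


def classify(known: bool, hazardous: bool) -> Area:
    """Map a scenario's two attributes to one of the four areas."""
    if known:
        return Area.KNOWN_HAZARDOUS if hazardous else Area.KNOWN_SAFE
    return Area.UNKNOWN_HAZARDOUS if hazardous else Area.UNKNOWN_SAFE


def hazardous_share(scenarios):
    """Fraction of (known, hazardous) records that fall in areas 2 and 3."""
    if not scenarios:
        return 0.0
    hazardous_areas = (Area.KNOWN_HAZARDOUS, Area.UNKNOWN_HAZARDOUS)
    count = sum(1 for known, hazardous in scenarios
                if classify(known, hazardous) in hazardous_areas)
    return count / len(scenarios)


catalogue = [(True, False), (True, True), (False, True), (False, False)]
share = hazardous_share(catalogue)
```

Two distinct activities reduce this share: improving the function moves scenarios from area 2 to area 1, while exploration through testing moves scenarios from area 3 to area 2, where they can then be addressed.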


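Research on SOTIF for intelligent driving vehicles is still at an early stage. ISO/PAS 21448 specifies the basic approach and process for achieving SOTIF [16] and provides guidance for research in this area.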

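Researchers have done much work on how to understand scenarios. Reference [79] treats the spatio-temporal arrangement of physical objects as a scene. Reference [80] holds that a scenario contains the dynamic elements of the environment together with certain specified driving instructions, and it analyzes SOTIF problems in terms of scenario segments, where each scenario begins where the previous one ends. Reference [81] proposed the idea of a scene tree, which decomposes a scene into simple elements arranged in a tree structure. Reference [82] summarized earlier work and defined a scene as a snapshot of the environment, including dynamic elements (vehicles, people, and so on), static elements (buildings, scenery, and so on), and the relationships among these entities.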

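Beyond scenario understanding, reference [83] clarified the connections and differences among information security, functional safety, and SOTIF. Reference [84] reported on the relationship between functional safety and SOTIF. Reference [85] analyzed the challenges of SOTIF research in depth and proposed a corresponding risk assessment framework.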

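SOTIF theory and its qualitative and quantitative analysis have also attracted attention. Bosch used the V-model to apply SOTIF to the ADAS development process and analyzed DA/AD systems with performance fault trees [86]. TNO combined scenario-based and data-driven approaches in the StreetWise method for building and maintaining a database of real-world scenarios, which provides realistic test cases for intelligent driving [87]. Reference [88] proposed a set of Bayesian stopping rules, which offer a reference for SOTIF testing. Reference [89] brought the information-theoretic concept of entropy into SOTIF research, introducing safety entropy and a method for quantifying the degree of SOTIF based on it.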
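The question a stopping rule answers is how much failure-free testing is enough. The sketch below does not reproduce the Bayesian rules of reference [88]; it uses a simpler classical bound as a stand-in, under the assumption that tests are independent and each fails with the same unknown probability. If that probability were at least `p_max`, the chance of seeing `n` failure-free tests would be at most `(1 - p_max)**n`, so testing can stop once this falls to `1 - confidence`. The function name and parameters are hypothetical.

```python
import math


def failure_free_tests_needed(p_max: float, confidence: float) -> int:
    """Smallest n with (1 - p_max)**n <= 1 - confidence.

    p_max:      largest per-test failure probability one is willing to accept
    confidence: required confidence that the true probability is below p_max
    """
    if not (0.0 < p_max < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("p_max and confidence must lie strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_max))


# Claiming a failure probability below 1e-3 per scenario run with 99% confidence.
n_runs = failure_free_tests_needed(p_max=1e-3, confidence=0.99)
```

The required number grows roughly in inverse proportion to `p_max`, which shows why demonstrating very low failure rates by road testing alone is impractical and why scenario databases and quantitative measures such as those above are being studied.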


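MIT holds that autonomous driving should be divided into two levels: shared autonomy (human-computer co-driving) and full autonomy [90]. This classification gives researchers a guideline, and adding the necessary constraints during research helps the work proceed smoothly. The time is not yet ripe for fully autonomous, driverless intelligent vehicles to solve the SOTIF problem, so human-computer co-driving is a route that fits the current state of research well.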

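In conventional driving, the human is the agent that controls the vehicle and is highly intelligent; the goal of the intelligent vehicle is to replace the driver with a computer. Judging from current progress, however, no choice of sensors and no degree of algorithmic robustness can fully compensate for the uncertainty of intelligent algorithms. Supplementing the vehicle with human intelligence under extreme driving conditions, so that human judgment offsets this uncertainty, is therefore a research direction worth attention.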

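Human-computer co-driving is usually divided into three levels: human-machine interaction at the information perception layer, human-machine cooperation at the planning and decision layer, and human-machine interaction at the execution and control layer [91]. At the information perception layer, the human senses supplement the vehicle's sensors and thereby offset the uncertainty of information acquisition. At the planning and decision layer, cooperation offsets the uncertainty of information understanding and agent decision-making: because an intelligent vehicle is a nonlinear system, most of the optima found by intelligent algorithms are only local, so they are supplemented by human decisions such as turning and speed control. At the execution and control layer, human experience and intelligent control algorithms together control the vehicle precisely.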


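This paper first summarized the application and research progress of intelligent algorithms in intelligent vehicles from the two perspectives of intelligent perception and intelligent decision-making. It then analyzed the uncertainty of intelligent algorithms from three perspectives (information acquisition, information understanding, and intelligent decision-making), introduced SOTIF as an approach to the safety problems caused by that uncertainty, and summarized the state of SOTIF research. Finally, it summarized research on human-computer co-driving as a means of achieving SOTIF.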

A Survey: Artificial Intelligence and its Security in Intelligent Vehicle


Abstract: With the development of artificial intelligence (AI) technology, intelligent systems such as intelligent driving vehicles and intelligent robots are gradually replacing or assisting humans in simple and complex tasks in a variety of scenarios. Starting from the intelligent algorithms used in intelligent vehicles, this paper summarizes research progress on AI perception and decision-making algorithms in intelligent vehicles and discusses the uncertainty of intelligent algorithms. From the perspective of the safety problems caused by that uncertainty, it then discusses the significance and development of the safety of the intended functionality (SOTIF). Finally, it discusses why human-computer co-driving is necessary for current intelligent driving vehicles to achieve SOTIF.


Key words: AI; human-computer co-driving; intelligent driving; SOTIF; statistical pattern recognition


[1] 常周林, 袁婷. 人工智能在智能机器人系统中的应用研究[J]. 科技创新导报, 2016, 13(23): 10. CHANG Zhou-lin, YUAN Ting. Application of artificial intelligence in intelligent robot system[J]. Science and Technology Innovation Herald, 2016, 13(23): 10. [2] ZHANG Y, WILLIAM C, NAVDEEP J. Very deep convolutional networks for end-to-end speech recognition[C]//IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). [S. l.]: IEEE, 2017: 4845-4849. [3] SAINATH T N, MOHAMED A R, BRIAN K, et al. Deep convolutional neural networks for LVCSR[C]//IEEE International Conference on Acoustics, Speech and Signal Processing. [S. l.]: IEEE, 2013: 8614-8618. [4] ALEX K, SUTSKEVER I, GEOFFREY E H. Imagenet classification with deep convolutional neural networks[C]//Advances in Neural Information Processing Systems. [S. l.]: IEEE, 2012: 1097-1105. [5] KAREN S, ANDREW Z. Very deep convolutional networks for large-scale image recognition[C]//The 3rd International Conference on Learning Representations. [S.l.]: [s.n.], 2015:1884-2020. [6] HE Kai-ming, ZHANG Xiang-yu, REN Shao-qing, et al. Deep residual learning for image recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S. l.]: IEEE, 2016: 770-778. [7] CHRISTIAN S, LIU Wei, JIA Yang-qing, et al. Going deeper with convolutions[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S. l.]: IEEE, 2015: 1-9. [8] ZHANG Wei-nan, ZHU Qing-fu, WANG Yi-fa, et al. Neural personalized response generation as domain adaptation[J]. World Wide Web, 2019(4): 1427-1446. [9] TAO Chong-yang, MOU Li-li, ZHAO Dong-yan, et al. Ruber: An unsupervised method for automatic evaluation of open-domain dialog systems[EB/OL]. [2018-11-12]. https://arxiv.org/abs/1701.03079. [10] 志刚. 什么是人工智能[J]. 大众科学, 2018(1): 44-45. ZHI Gang. What is AI[J]. China Public Science, 2018(1): 44-45. [11] 王家祺, 王赛. 人工智能技术的发展趋势探讨[J]. 通讯世界, 2017(16): 1006-4222. WANG Jia-qi, WANG Sai. 
Discussion on the development trend of artificial intelligence technology[J]. Telecom World, 2017(16): 1006-4222. [12] 崔雍浩, 商聪, 陈锶奇. 人工智能综述: AI的发展[J]. 无线电通信技术, 2019, 45(3): 5-11. CUI Yong-hao, SHANG Cong, CHEN Tian-qi. A review of artificial intelligence: The development of AI[J]. Radio Communication Technology, 2019, 45(3): 5-11. [13] 韩晔彤. 人工智能技术发展及应用研究综述[J]. 电子制作, 2016, DOI: 10.3969/j.issn.1006-5059.2016.12.082. HAN Ye-tong. Development and application of artificial intelligence technology[J]. Electronic Production, 2016, DOI: 10.3969/j.issn.1006-5059.2016.12.082. [14] 李开复, 王咏刚. 到底什么是人工智能[J]. 科学大观园, 2018(2): 48-49. LI Kai-fu, WANG Yong-gang. What is artificial intelligence[J]. Science Grand View Park, 2018(2): 48-49. [15] 中国国务院. 新一代人工智能发展规划[J]. 科技导报, 2017(17): 113. State Council of China. New generation artificial intelligence development plan[J]. Science & Technology Review, 2017(17): 113. [16] LI Bo. Road vehicles-Safety of the intended functionality[J]. China Auto, 2019(4): 20-22. [17] DALAL N, Bill T. Histograms of oriented gradients for human detection[C]//In 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR). [S.l.]: IEEE, 2005: 886-893. [18] LOWE D G. Distinctive image features from scale-invariant keypoints[J]. International Journal of Computer Vision, 2004(2): 91-110. [19] BAY H, TINNE T, LUC V G. Surf: Speeded up robust features[C]//European Conference on Computer Vision. [S. l.]: Springer, 2006: 404-417. [20] RUBLEE E, VINCENT R, KURT K, et al. ORB: An efficient alternative to SIFT or SURF[C]//International Conference on Computer Vision. [S.l.]: IEEE, 2011: 2564-2571. [21] CALONDER M, VINCENT L, CHRISTOPH S, et al. Brief: Binary robust independent elementary features[C]// European Conference on Computer Vision. [S.l.]: Springer, 2010: 778-792. [22] ZOU Zheng-xia, SHI Zhen-wei, GUO Yu-hong, et al. Object detection in 20 years: A survey[EB/OL]. [2019-10-25]. https://arxiv.org/abs/1905.05055v2. 
[23] ROSS G, JEFF D, TREVOR D, et al. Rich feature hierarchies for accurate object detection and semantic segmentation[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S.l.]: IEEE, 2014: 580-587. [24] SUYKENS J A K, JOOS V. Least squares support vector machine classifiers[J]. Neural Processing Letters, 1999(3): 293-300. [25] ROSS G. Fast R-CNN[C]//Proceedings of the IEEE International Conference on Computer Vision. [S.l.]: IEEE, 2015: 1440-1448. [26] REDMON J, SANTOSH D, ROSS G, et al. You only look once: Unified, real-time object detection[C]//Proceedings of the IEEE Conference on Computer Vision and pattern Recognition. [S.l.]: IEEE, 2016: 779-788. [27] REDMON J, ALI F. YOLO9000: Better, faster, stronger[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S.l.]: IEEE, 2017: 7263-7271. [28] REDMON J, ALI F Yolov3: An incremental improvement[R]. Washington: University of Washington, 2018. [29] LIU W, DRAGOMIR A, DUMITRU E, et al. Ssd: Single shot multibox detector[C]//European Conference on Computer Vision. [S.l.]: Springer, 2016: 21-37. [30] KIM J, MINHO L. Robust lane detection based on convolutional neural network and random sample consensus[C]//International Conference on Neural Information Processing. [S.l.]: Springer, 2014: 454-461. [31] HUVAL B, WANG T, SAMEEP T, et al. An empirical evaluation of deep learning on highway driving[EB/OL]. [2019-11-10]. https://arxiv.org/abs/1504.01716. [32] 方睿. 基于视觉的车道线检测技术综述[J]. 内江科技, 2018(7): 41-42. FANG Rui. Overview of vision based lane detection technology[J]. Neijiang Technology, 2018(7): 41-42. [33] CHOUGULE S, NORA K, ASAD I, et al. Reliable multilane detection and classification by utilizing CNN as a regression network[C]//Proceedings of the European Conference on Computer Vision. [S.l.]: Springer, 2018: 740-752. [34] LEE S, JUNSIK K, JAE S Y, et al. 
Vpgnet: Vanishing point guided network for lane and road marking detection and recognition[C]//Proceedings of the IEEE International Conference on Computer Vision. [S.l.]: IEEE, 2017: 1947-1955. [35] PAN Xin-gang, SHI Jian-ping, LUO Ping, et al. Spatial as deep: Spatial cnn for traffic scene understanding[EB/OL]. [2019-11-27]. https://arxiv.org/abs/1712.06080. [36] MA Chao, HUANG Jia-Bin, YANG Xiao-kang, et al. Hierarchical convolutional features for visual tracking[C]//Proceedings of the IEEE International Conference on Computer Vision. [S.l.]: IEEE, 2015: 3074-3082. [37] QI Yuan-kai, ZHANG Sheng-ping, LEI Qin, et al. Hedged deep tracking[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S.l.]: IEEE, 2016: 4303-4311. [38] GLADH S, MARTIN D, FAHAD S K, et al. Deep motion features for visual tracking[C]//The 23rd International Conference on Pattern Recognition. [S.l.]: IEEE, 2016: 1243-1248. [39] CHOI J, CHANG H J, YUN S, et al. Attentional correlation filter network for adaptive visual tracking[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S. l.]: IEEE, 2017: 4807-4816. [40] Dai S, LI L, LI Z. Modeling vehicle interactions via modifified LSTM models for trajectory prediction[J]. IEEE Acess, 2019, 7: 287-296. [41] ALEX Zyner, STEWART W, EDUARDO N. A recurrent neural network solution for predicting driver intention at unsignalized intersections[J]. IEEE Robotics and Automation Letters, 2018(3): 1759-1764. [42] ALEX Z, STEWART W, JAMES W, et al. Long short term memory for driver intent prediction[C]//2017 IEEE Intelligent Vehicles Symposium (IV). [S.l.]: IEEE, 2017: 1484-1489. [43] PHILLIPS D J, WHEELER T A, MYKEL J K. Generalizable intention prediction of human drivers at intersections[C]//2017 IEEE Intelligent Vehicles Symposium (IV). [S. l.]: IEEE, 2017: 1665-1670. [44] DING W C, SHEN S J. 
Online vehicle trajectory prediction using policy anticipation network and optimization-based context reasoning[C]//2019 International Conference on Robotics and Automation (ICRA). [S.l.]: IEEE, 2019: 9610-9616. [45] DAI S Z, LI L, LI Z H. Modeling vehicle interactions via modified LSTM models for trajectory prediction[J]. IEEE Access, 2019(7): 38287-38296. [46] LEE D, KWON Y P, SARA M, et al. Convolution neural network-based lane change intention prediction of surrounding vehicles for ACC[C]//2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC). [S.l.]: IEEE, 2017: 1-6. [47] CUI H G, VLADAN R, CHOU F C, et al. Multimodal trajectory predictions for autonomous driving using deep convolutional networks[C]//2019 International Conference on Robotics and Automation (ICRA). [S.l.]: IEEE, 2019: 2090-2096. [48] DJURIC N, VLADAN R, CUI H G, et al. Motion prediction of traffic actors for autonomous driving using deep convolutional networks[EB/OL]. [2019-10-20]. https://arxiv.org/abs/1808.05819v1. [49] SANDLER M, ANDREW H, ZHU M L, et al. Mobilenetv2: Inverted residuals and linear bottlenecks[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S.l.]: IEEE, 2018: 4510-4520. [50] CASAS S, LUO W J, RAQUEL U. Intentnet: Learning to predict intention from raw sensor data[C]//Conference on Robot Learning. [S.l.]: [s. n.], 2018: 947-956. [51] SCHREIBER M, STEFAN H, KLAUS D. Long-term occupancy grid prediction using recurrent neural networks[C]//2019 International Conference on Robotics and Automation (ICRA). [S. l.]: IEEE, 2019: 9299-9305. [52] HUANG S D, GAMINI D. Convergence and consistency analysis for extended Kalman filter based SLAM[J]. IEEE Transactions on Robotics, 2007, 23(5): 1036-1049. doi: 10.1109/TRO.2007.903811 [53] LI Jian, LI Qing, CHENG Nong. A combined visual-inertial navigation system of MSCKF and EKF-SLAM[C]//2018 IEEE CSAA Guidance, Navigation and Control Conference (CGNCC). [S.l.]: IEEE, 2018: 1-6. 
[54] KLEIN G, DAVID M. Parallel tracking and mapping for small AR workspaces[C]//2007 6th IEEE and ACM International Symposium on Mixed and Augmented Reality. [S.l.]: IEEE, 2007: 225-234. [55] RAUL M A, JOSE M M M, TARDOS J D. ORB-SLAM: A versatile and accurate monocular SLAM system[J]. IEEE Transactions on Robotics, 2015, 31(5): 1147-1163. doi: 10.1109/TRO.2015.2463671 [56] ENGEL J, KOLTUN V, CREMERS D. Direct sparse odometry[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2017, 40(3): 611-625. [57] ENGEL J, JURGEN S, DANIEL C. Semi-dense visual odometry for a monocular camera[C]//Proceedings of the IEEE International Conference on Computer Vision. [S.l.]: IEEE, 2013: 1449-1456. [58] QIN T, LI P L, SHEN S J. Vins-mono: A robust and versatile monocular visual-inertial state estimator[J]. IEEE Transactions on Robotics, 2018, 34(4): 1004-1020. doi: 10.1109/TRO.2018.2853729 [59] RAÚL M A. ORB_SLAM open source[EB/OL]. [2019-11-10]. http://webdiis.unizar.es/~raulmur/orbslam/. [60] LI T, MEI T, KWEON I S, et al. Contextual bag-of-words for visual categorization[J]. IEEE Transactions on Circuits and Systems for Video Technology, 2010, 21(4): 381-392. [61] CHEN Z T, OBADIAH L, ADAM J, et al. Convolutional neural network-based place recognition[EB/OL]. [2019-11-22]. https://arxiv.org/abs/1411.1509. [62] RELJA A, PETR G, AKIHIKO T, et al. NetVLAD: CNN architecture for weakly supervised place recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. [S.l.]: IEEE, 2016: 5297-5307. [63] KIM H J, ENRIQUE D, FRAHM J M. Learned contextual feature reweighting for image geo-localization[C]//2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). [S.l.]: IEEE, 2017: 3251-3260. [64] ZHU Y Y, WANG J, XIE L X, et al. Attention-based pyramid aggregation network for visual place recognition[C]//Proceedings of the 26th ACM International Conference on Multimedia. [S.l.]: ACM, 2018: 99-107. [65] LIU L, LI H D, DAI Y C. 
Deep stochastic attraction and repulsion embedding for image based localization[EB/OL]. [2019-11-12]. https://arxiv.org/abs/1808.08779v2. [66] LOWRY S, NIKO S, PAUL N, et al. Visual place recognition: A survey[J]. IEEE Transactions on Robotics, 2015, 32(1): 1-19. [67] DANIL P. Computational intelligence in automotive applications[M]. [S.l.]: Springer, 2008. [68] BALAKIRSKY S B. A framework for planning with incrementally created graphs in attributed problem spaces[M]. [S.l.]: IOS Press, 2003. [69] KUMAR P, MATHIAS P, STÉPHANIE L, et al. Learning-based approach for online lane change intention prediction[C]//2013 IEEE Intelligent Vehicles Symposium (IV). [S.l.]: IEEE, 2013: 797-802. [70] YAMADA K, HIROSHI M, KAZUHIRO U. A method for analyzing interaction of driver intention through vehicle behavior when merging[C]//2014 IEEE Intelligent Vehicles Symposium Proceedings. [S.l.]: IEEE, 2014: 158-163. [71] NISHIWAKI Y, CHIYOMI M, NORIHIDE K, et al. Generating lane-change trajectories of individual drivers[C]//2008 IEEE International Conference on Vehicular Electronics and Safety. [S.l.]: IEEE, 2008: 271-275. [72] 袁盛玥. 自动驾驶车辆城区道路环境换道行为决策方法研究[D]. 北京: 北京理工大学, 2016. YUAN Sheng-yue. Research on the decision-making method of road environment changing behavior of autonomous vehicles in urban areas[D]. Beijing: Beijing University of Technology, 2016. [73] STEPHEN H. A brief history of time: From big bang to black holes[M]. [S. l.]: Random House, 2009. [74] WANG Yan-peng, HAN Tao, WANG Xue-zhao. The development trend of artificial intelligence in the group 20[J]. Science Focus, 2019, 14(1): 20-32. [75] 张昕. 人工智能中的不确定性问题研究[D]. 湖南: 国防科学技术大学, 2012. ZHANG Xin. Research on uncertainty in artificial intelligence[D]. HuNan: National University of Defense Science and Technology, 2012. [76] 骆清铭. 脑空间信息学—连接脑科学与类脑人工智能的桥梁[J]. 中国科学: 生命科学, 2017(47): 1015-1024. LUO Qing-ming. Brain spatial informatics-a bridge between brain science and brain like artificial intelligence[J]. 
Chinese Science: Life Science, 2017(47): 1015-1024. [77] SERGIO V. Fifty years of Shannon theory[J]. IEEE Transactions on Information Theory, 1998, 44(6): 2057-2078. doi: 10.1109/18.720531 [78] SVANTE W, KIM E, PAUL G. Principal component analysis[J]. Chemometrics and Intelligent Laboratory Systems, 1987(2): 37-52. [79] MAURER Markus. EMS-vision: Knowledge representation for flexible automation of land vehicles[C]//Proceedings of the IEEE Intelligent Vehicles Symposium. [S. l.]: IEEE, 2000: 575-580. [80] GEYER S, MARCEL B, BENJAMIN F, et al. Concept and development of a unified ontology for generating test and use-case catalogues for assisted and automated vehicle guidance[J]. IET Intelligent Transport Systems, 2013, 8(3): 183-189. [81] THOMASON M G, Gonzalez R C. Data structures and databases in digital scene analysis[C]//Advances in Information Systems Science. Boston: Springer, 1985: 1-47. [82] ULBRICH S, TILL M, ANDREAS R, et al. Defining and substantiating the terms scene, situation, and scenario for automated driving[C]//2015 IEEE 18th International Conference on Intelligent Transportation Systems. [S.l.]: IEEE, 2015: 982-988. [83] 毛向阳, 尚世亮, 崔海峰. 自动驾驶汽车安全影响因素分析与应对措施研究[J]. 上海汽车, 2018(1): 33-37. doi: 10.3969/j.issn.1007-4554.2018.01.08 MAO Xiang-yang, SHANG Shi-liang, CUI Hai-feng. Auto driving vehicle safety impact factors analysis and countermeasures[J]. Shanghai Motor, 2018(1): 33-37. doi: 10.3969/j.issn.1007-4554.2018.01.08 [84] MIRKO C. Automated driving: Challenges in the interplay between functional safety and safety of the intended functionality[M]. Berlin: [s.n.], 2018. [85] JOHN B. Safety argument framework for highly automated vehicles[EB/OL]. [2019-09-12]. http://safety.addalot.se/upload/2017/2-6-2%20JohnBirch.pdf. [86] SUSANNE E. Bosch case study: Application of SOTIF for ADAS[EB/OL]. [2019-09-15]. https://www.automotive-iq.com/events-sotif-conference-usa/downloads/partner-content-robert-bosch-a-case-study-application-of-sotif-for-adas. 
[87] ELROFAI H, PAARDEKOOPER J P, ERWIN D G, et al. Scenario-based safety validation of connected and automated driving[EB/OL]. [2019-11-10]. https://publications.tno.nl/publication/34626550/AyT8Zc/TNO-2018-streetwise.pdf. [88] BEV L, DAVID W. Some conservative stopping rules for the operational testing of safety critical software[J]. IEEE Transactions on Software Engineering, 1997, 23(11): 673-683. doi: 10.1109/32.637384 [89] 车天伟, 马建峰, 王超, 等. 基于安全熵的多级访问控制模型量化分析方法[J]. 华东师范大学学报 (自然科学版), 2015(1): 172. CHE Tian-wei, MA Jian-feng, WANG Chao, et al. Quantitative analysis method of multilevel access control model based on security entropy[J]. Journal of East China Normal University (Natural Science Edition), 2015(1): 172. [90] LEX F. Human-centered autonomous vehicle systems: Principles of effective shared autonomy[EB/OL]. [2019-12-10]. https://arxiv.org/abs/1810.01835. [91] 胡云峰, 曲婷, 刘俊, 等. 智能汽车人机协同控制的研究现状与展望[J]. 自动化学报, 2019(7): 1261-1280. HU Yun-feng, QU Ting, LIU Jun, et al. Research status and prospect of human-machine cooperative control of intelligent vehicles[J]. Acta Automatica Sinica, 2019(7): 1261-1280.
