-

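As one of the fastest-growing research directions in machine learning and artificial intelligence, deep learning has attracted intense attention from both academia and industry. Deep learning is an umbrella term for a family of machine learning algorithms based on automatic feature learning and deep neural networks (DNNs). Research on deep learning has advanced considerably. It has achieved major breakthroughs over the traditional framework of hand-crafted feature selection and extraction. It has had an increasingly important impact on fields including natural language processing, biomedical analysis, and remote sensing image interpretation, and it has achieved revolutionary success in computer vision and speech recognition.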

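Applying deep learning to natural language processing (NLP) tasks is currently a major research focus. NLP is an important research direction at the intersection of computer science and artificial intelligence, and it draws on knowledge and results from linguistics, computer science, logic, psychology, artificial intelligence, and other disciplines. Its main tasks include part-of-speech tagging, machine translation, named entity recognition, question answering, sentiment analysis, automatic summarization, syntactic parsing, and coreference resolution. Natural language is a highly abstract symbolic system, so relationships between texts are hard to measure, and research has relied heavily on manually engineered features. The strength of deep learning lies precisely in its strong discriminative power and its ability to learn features automatically, which suits the high dimensionality, scarcity of labels, and large data volumes that characterize natural language. This paper therefore surveys how deep learning is currently applied in NLP and analyzes the difficulties in these applications and possible directions for future breakthroughs.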


-

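Deep learning originated from research on artificial neural networks. The artificial neural network (ANN) was introduced as a computational tool in [1]. Hebb's self-organizing learning rule, the perceptron, the Hopfield network, the Boltzmann machine, the error backpropagation algorithm, and radial basis function networks were subsequently proposed in turn. Reference [2] used a greedy layer-wise algorithm to initialize deep belief networks, launching the wave of deep learning. It argued that deep learning is essentially a general-purpose feature learning method whose core idea is to extract low-level features and combine them into higher-level abstract representations in order to discover the distributional structure of the data. The method of [2] effectively alleviated the vanishing and exploding gradient problems that arise as DNNs become deeper. Reference [3] then replaced the hidden layers of the deep belief network with autoencoders and showed experimentally that DNNs are effective. Meanwhile, research has found that human information processing must extract complex structure from rich sensory input and reconstruct internal representations, so that both the human language system and the perceptual system exhibit a clear hierarchical structure [4]. From a biologically inspired perspective, this provides a theoretical justification for the effectiveness of the multilayer structure of DNNs.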

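The rise of deep learning also depends on big data and on improvements in computing performance. Big data is a collective term for data characterized by large volume, variety, and low value density; deep learning is a common methodology for processing big data, and the two are closely related. Acoustic modeling is an example: it typically involves billions to hundreds of billions of training samples, and experiments have found that trained models remain underfitted, that is, they lack the capacity to absorb that much data. Big data therefore calls for higher-capacity deep models [5]. In addition, with the development of graphics processing units (GPUs), efficient and scalable distributed GPU clusters have greatly accelerated the training of deep models and strongly promoted the adoption of deep learning in industry.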

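NLP applications have become another active focus of deep learning research. In 2013, with the rise of the word2vec word vectors [6], research on distributed word features proliferated. From 2014 onward, researchers used different DNN models, such as convolutional, recurrent, and recursive networks, to make major progress on traditional NLP applications including part-of-speech tagging, sentiment analysis, and syntactic parsing. After 2015, deep learning methods made rapid inroads into natural language understanding tasks such as machine translation, question answering, automatic summarization, and reading comprehension, gradually becoming the mainstream tool of NLP. Over the next few years, deep learning is expected to continue to have a major impact on natural language understanding [7].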

-

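Distributed feature representations (distributional representations) are the point at which deep learning and NLP meet; these features are learned by neural network language models.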

-

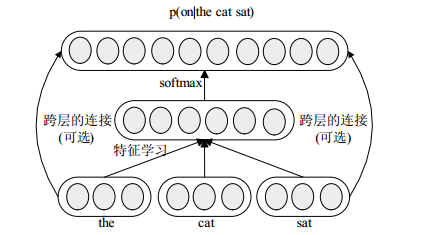

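A language model is a statistical model that computes the probability that an arbitrary word sequence occurs in text. It is a foundational topic in NLP and plays a crucial role in speech recognition, part-of-speech tagging, machine translation, syntactic parsing, and other research. A language model produced by a neural network is called a neural network language model; this idea was proposed in [8]. Subsequently, [9] and others studied neural network language models in depth, and their results attracted wide attention. The neural network language model built in [9], shown in Figure 1, has only a single hidden layer. It uses a softmax to compute the conditional probability of each next word given its preceding words, from which the probability of a whole word sequence follows by the chain rule, and it trains the network parameters by stochastic gradient ascent to maximize the penalized log-likelihood.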
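To make the single-hidden-layer architecture concrete, the following is a minimal forward-pass sketch in pure Python of a feed-forward neural network language model in the spirit of the one described above. It is an illustrative assumption, not the implementation of [9]: the class `TinyNNLM`, its sizes, and its initialization are hypothetical, it omits the optional direct input-to-output connections that some versions include, and it leaves out the stochastic gradient ascent training loop.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a matrix stored as a list of rows by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


class TinyNNLM:
    """Hypothetical feed-forward NNLM: embed the n-1 context words, concatenate
    them, apply one tanh hidden layer, then a softmax over the vocabulary."""

    def __init__(self, vocab_size, emb_dim, n_context, n_hidden, seed=0):
        rng = random.Random(seed)

        def init(rows, cols):
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

        self.n_context = n_context
        self.C = init(vocab_size, emb_dim)            # shared word-embedding table
        self.H = init(n_hidden, emb_dim * n_context)  # context -> hidden weights
        self.d = [0.0] * n_hidden                     # hidden bias
        self.U = init(vocab_size, n_hidden)           # hidden -> output weights
        self.b = [0.0] * vocab_size                   # output bias

    def next_word_probs(self, context_ids):
        """P(w_t = j | previous n-1 words) for every word j in the vocabulary."""
        x = [v for i in context_ids for v in self.C[i]]  # concatenated embeddings
        h = [math.tanh(a + d) for a, d in zip(matvec(self.H, x), self.d)]
        logits = [a + b for a, b in zip(matvec(self.U, h), self.b)]
        return softmax(logits)

    def sequence_log_prob(self, ids):
        """Chain rule: log P(sequence) as a sum of conditional log-probabilities
        (the first n-1 words are treated as given context)."""
        total = 0.0
        for t in range(self.n_context, len(ids)):
            probs = self.next_word_probs(ids[t - self.n_context:t])
            total += math.log(probs[ids[t]])
        return total


model = TinyNNLM(vocab_size=5, emb_dim=3, n_context=2, n_hidden=4)
probs = model.next_word_probs([1, 2])
log_p = model.sequence_log_prob([0, 1, 2, 3, 4])
```

The embedding table `C` is shared across all context positions, which is what lets the model learn one vector per word; those vectors are the "word vectors" that later work focused on.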

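Building on [9], [10] automatically learned a hierarchical structure of words from a corpus and, combining it with the restricted Boltzmann machine, proposed the HLBL model. Building on [10], [11] proposed the SENNA model, which trains word vectors by scoring how plausible a sentence is, and applied it successfully to several NLP tasks. Reference [12] later reimplemented the HLBL and SENNA models and compared their strengths and weaknesses. Building on SENNA, [13] added global context information to the training of each word vector and represented each word with multiple vectors to handle polysemy. Unlike the other work, [6] trained language models with recurrent neural networks and, in 2013, open-sourced word2vec. Starting with HLBL, the goal of studying language models shifted from obtaining an actual language model to obtaining usable word vectors.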
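The idea of training word vectors by scoring sentence plausibility can be illustrated with a pairwise ranking criterion: a genuine text window should score higher, by a margin, than a copy whose centre word has been replaced by a random word. The sketch below is a simplified assumption, not SENNA's code: the scorer `window_score` is linear in the concatenated word vectors (SENNA's scorer has a hidden layer), one corrupted word is sampled per call as in stochastic training, and all names and toy values are hypothetical. Gradient updates are omitted.

```python
import random


def window_score(window_ids, emb, weights):
    """Plausibility score of a text window. Simplified (assumption) to a linear
    function of the concatenated word vectors."""
    x = [v for i in window_ids for v in emb[i]]
    return sum(w * v for w, v in zip(weights, x))


def ranking_hinge_loss(window_ids, emb, weights, rng):
    """Pairwise ranking criterion: the genuine window should score at least 1
    higher than a corrupted copy whose centre word is a random other word."""
    centre = len(window_ids) // 2
    corrupted = list(window_ids)
    candidates = [w for w in range(len(emb)) if w != window_ids[centre]]
    corrupted[centre] = rng.choice(candidates)
    s_true = window_score(window_ids, emb, weights)
    s_fake = window_score(corrupted, emb, weights)
    return max(0.0, 1.0 - s_true + s_fake)


rng = random.Random(0)
emb = [[0.0], [5.0], [0.0]]  # toy 1-d word vectors for a 3-word vocabulary
weights = [1.0, 1.0, 1.0]    # one weight per position in a 3-word window
loss_good = ranking_hinge_loss([0, 1, 0], emb, weights, rng)  # true centre word scores high
loss_bad = ranking_hinge_loss([1, 0, 1], emb, weights, rng)   # corruption can only raise the score
```

Because only relative scores matter, this criterion never normalizes over the vocabulary, which is why such models can be trained without computing an expensive softmax.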

-

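Word vectors usually refer to distributed feature representations of words learned by a language model. Also called word embeddings, they represent the complex contextual information of a large corpus densely rather than sparsely. The best-known publicly released word vectors include the following six: HLBL, SENNA, Turian's, Huang's, word2vec, and GloVe [14]. Reference [15] found that word2vec vectors encode semantic relationships, i.e. adding and subtracting word vectors exhibits clear semantic regularities, and obtained better results than Turian's vectors on the SemEval 2012 task, demonstrating the practical usefulness of word2vec. Reference [16] showed that word2vec trained with the skip-gram model and negative sampling implicitly factorizes a word-context matrix of pointwise mutual information (PMI) shifted by a constant, which links it to word vectors obtained by SVD factorization of co-occurrence matrices. In the same year, [14] proposed GloVe and reported that such matrix-based word vectors can considerably outperform word2vec; however, under the evaluation metrics proposed in [17], word2vec outperformed GloVe and SENNA on most metrics. Beyond applying word vectors to current NLP benchmarks, many researchers have studied word vectors broadly and in depth: for example, [18] studied the effect of language differences on word vectors, [19] discussed in depth how to produce better word vectors, and [20] used word vectors to compute document similarity.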
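The "addition and subtraction" regularity mentioned above is usually tested with the vector-offset method: to answer a : b :: c : ?, find the word whose vector is most cosine-similar to v_b - v_a + v_c. The sketch below shows the method in pure Python; the vectors are hand-made toy values chosen for illustration, not trained word2vec output, and the function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def analogy(a, b, c, vectors):
    """Solve a : b :: c : ? by returning the word whose vector is most
    cosine-similar to v_b - v_a + v_c, excluding the three query words."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, vectors[w]))


# Hand-made toy vectors (not trained): dimension 0 ~ "royalty", dimension 1 ~ "gender"
vectors = {
    "king": [1.0, 1.0, 0.2],
    "queen": [1.0, -1.0, 0.2],
    "man": [0.0, 1.0, 0.1],
    "woman": [0.0, -1.0, 0.1],
    "apple": [-1.0, 0.0, 1.0],
}
answer = analogy("man", "king", "woman", vectors)  # man : king :: woman : ?
```

Here `answer` is `"queen"`, since king - man + woman lands exactly on the toy vector for queen. With real embeddings the match is approximate, and excluding the query words matters because the nearest vector is often one of them.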

2.1. Neural Network Language Models

2.2. Word Vectors

-

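A deep belief network (DBN) is a model built by stacking restricted Boltzmann machines (RBMs). By training its weights, a DBN learns to reconstruct the training data presented at its input layer. A DBN is trained as follows:

1) If the current RBM is the bottom layer, it takes the raw data as input; otherwise it takes the output of the previous RBM. Train the current RBM;

2) If the network has reached the required number of layers, go to step 4); otherwise make the next RBM the current one;

3) Repeat steps 1) and 2);

4) Fine-tune the network with a supervised learning algorithm so that the model converges to a local optimum.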
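Steps 1) to 3) above can be sketched as greedy layer-wise pretraining. The pure-Python sketch below assumes binary RBMs trained with one-step contrastive divergence (CD-1), the usual RBM training procedure, although the text does not specify it. Feeding the hidden-unit probabilities (rather than samples) to the next RBM is a common simplification and also an assumption here. The supervised fine-tuning of step 4) is omitted, and `RBM` and `pretrain_dbn` are hypothetical names.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class RBM:
    """Binary restricted Boltzmann machine; W[i][j] connects visible i to hidden j."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.W = [[rng.gauss(0.0, 0.1) for _ in range(n_hidden)] for _ in range(n_visible)]
        self.b = [0.0] * n_visible  # visible biases
        self.c = [0.0] * n_hidden   # hidden biases

    def hidden_probs(self, v):
        return [sigmoid(self.c[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.c))]

    def visible_probs(self, h):
        return [sigmoid(self.b[i] + sum(h[j] * self.W[i][j] for j in range(len(h))))
                for i in range(len(self.b))]

    def cd1_update(self, v0, lr):
        """One contrastive-divergence (CD-1) step on a single training vector."""
        h0 = self.hidden_probs(v0)
        h0_sample = [1.0 if self.rng.random() < p else 0.0 for p in h0]
        v1 = self.visible_probs(h0_sample)  # one-step reconstruction of the input
        h1 = self.hidden_probs(v1)
        for i in range(len(v0)):
            for j in range(len(h0)):
                self.W[i][j] += lr * (v0[i] * h0[j] - v1[i] * h1[j])
            self.b[i] += lr * (v0[i] - v1[i])
        for j in range(len(h0)):
            self.c[j] += lr * (h0[j] - h1[j])


def pretrain_dbn(data, hidden_sizes, epochs=20, lr=0.1, seed=0):
    """Greedy layer-wise pretraining (steps 1-3); fine-tuning (step 4) is omitted."""
    rng = random.Random(seed)
    rbms, layer_input = [], data
    n_in = len(data[0])
    for n_hidden in hidden_sizes:         # step 2: stop once enough layers exist
        rbm = RBM(n_in, n_hidden, rng)
        for _ in range(epochs):           # step 1: train the current RBM
            for v in layer_input:
                rbm.cd1_update(v, lr)
        rbms.append(rbm)
        # the next RBM is trained on this RBM's hidden activations
        layer_input = [rbm.hidden_probs(v) for v in layer_input]
        n_in = n_hidden
    return rbms, layer_input


data = [[1, 1, 0, 0, 0, 0], [0, 0, 1, 1, 0, 0], [0, 0, 0, 0, 1, 1], [1, 1, 0, 0, 0, 0]]
rbms, top_features = pretrain_dbn(data, hidden_sizes=[4, 2])
```

Each RBM is trained only once and then frozen before the next one is added, which is what makes the procedure "greedy"; the weights in `rbms` would serve as the initialization for supervised fine-tuning.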

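Reference [21] discussed how to choose the number of layers in RBMs and DBNs, the generalization ability of these networks, and possible extensions, and replaced the RBM in each layer of the DBN with an autoencoder (AE); [3] calls a network obtained by simply stacking several AEs in this way a stacked autoencoder (SAE). There are two typical improvements to SAEs: 1) imposing a sparsity constraint on the hidden units so that most neurons are inactive, which yields the sparse autoencoder [22]; 2) corrupting the input with noise during encoding and training the network to reconstruct the clean input, which makes the SAE more robust to noise and yields the stacked denoising autoencoder [23]. Owing to their strong feature learning ability [24], SAEs are widely used in fields such as multimodal retrieval [25], image classification [26], and sentiment analysis [27].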
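The denoising variant can be sketched as a single autoencoder layer that corrupts its input with masking noise (randomly zeroing components) but is trained to reconstruct the uncorrupted input. The pure-Python sketch below is an illustration under stated assumptions: sigmoid units, untied encoder and decoder weights, squared reconstruction error, plain per-sample gradient descent, and a masking rate of 0.3 are all choices made here, and `DenoisingAE` is a hypothetical name. Stacking would train a second layer on the codes returned by `encode`.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class DenoisingAE:
    """One autoencoder layer with masking noise and untied encoder/decoder weights."""

    def __init__(self, n_in, n_hidden, noise=0.3, seed=0):
        self.rng = random.Random(seed)
        self.noise = noise
        self.W = [[self.rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_hidden)]
        self.c = [0.0] * n_hidden  # encoder bias
        self.V = [[self.rng.uniform(-0.1, 0.1) for _ in range(n_hidden)] for _ in range(n_in)]
        self.b = [0.0] * n_in      # decoder bias

    def encode(self, x):
        return [sigmoid(cj + sum(w * xi for w, xi in zip(row, x))) for row, cj in zip(self.W, self.c)]

    def decode(self, h):
        return [sigmoid(bi + sum(v * hj for v, hj in zip(row, h))) for row, bi in zip(self.V, self.b)]

    def loss(self, x):
        """Squared reconstruction error of a clean input."""
        r = self.decode(self.encode(x))
        return 0.5 * sum((ri - xi) ** 2 for ri, xi in zip(r, x))

    def train_step(self, x, lr):
        # corrupt the input by zeroing random components, but reconstruct the CLEAN x
        x_tilde = [0.0 if self.rng.random() < self.noise else xi for xi in x]
        h = self.encode(x_tilde)
        r = self.decode(h)
        dr = [(ri - xi) * ri * (1 - ri) for ri, xi in zip(r, x)]  # gradient at decoder pre-activation
        dh = [sum(self.V[i][j] * dr[i] for i in range(len(dr))) * h[j] * (1 - h[j])
              for j in range(len(h))]                             # backpropagated into the encoder
        for i in range(len(dr)):
            for j in range(len(h)):
                self.V[i][j] -= lr * dr[i] * h[j]
            self.b[i] -= lr * dr[i]
        for j in range(len(dh)):
            for k in range(len(x_tilde)):
                self.W[j][k] -= lr * dh[j] * x_tilde[k]
            self.c[j] -= lr * dh[j]


data = [[1, 1, 0, 0, 0, 0], [0, 0, 1, 1, 0, 0], [0, 0, 0, 0, 1, 1]]
dae = DenoisingAE(n_in=6, n_hidden=3)
before = sum(dae.loss(x) for x in data)
for _ in range(300):
    for x in data:
        dae.train_step(x, lr=0.5)
after = sum(dae.loss(x) for x in data)
codes = [dae.encode(x) for x in data]  # input for the next layer when stacking
```

Because the target is the clean input, the layer cannot simply copy its input through; it has to learn features that recover missing components from the ones that survive the noise.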

-

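A recurrent neural network (RNN) is a neural network whose hidden layer has connections to itself. Unlike a feed-forward network, an RNN feeds the hidden-layer result of the current time step into the hidden-layer computation of the next step, so it can handle sequence problems such as text generation [28], machine translation [29], and speech recognition [30]. RNNs are trained with backpropagation through time (BPTT) [31]. Because of vanishing gradients, the error signal in an RNN typically propagates back only about 5 to 10 time steps, so [32] proposed long short-term memory (LSTM) on the basis of the RNN. LSTM uses a memory cell to retain earlier inputs, and its gates let the network learn when to reset the cell. LSTM has many structural variants; [33] compared eight popular ones. Reference [34] evaluated more than ten thousand recurrent architectures and identified some that may outperform LSTM on certain tasks.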
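A single LSTM time step can be written out directly. The pure-Python sketch below assumes the widely used variant with input, forget, and output gates, where a forget gate near 0 is what "resets" the cell; it is not necessarily the exact formulation of [32] or of any of the variants compared in [33]. The class name, sizes, and initialization are hypothetical, and training by BPTT is omitted.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def affine(W, b, v):
    """W v + b for a matrix stored as a list of rows."""
    return [bi + sum(w * vi for w, vi in zip(row, v)) for row, bi in zip(W, b)]


class LSTMCell:
    """LSTM cell whose gates all act on the concatenation [x_t; h_{t-1}]."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        n_cat = n_in + n_hidden

        def mat():
            return [[rng.uniform(-0.1, 0.1) for _ in range(n_cat)] for _ in range(n_hidden)]

        # one (weights, bias) pair per gate, plus one for the candidate cell content
        self.params = {name: (mat(), [0.0] * n_hidden) for name in ("i", "f", "o", "g")}
        self.n_hidden = n_hidden

    def step(self, x, h_prev, c_prev):
        z = list(x) + list(h_prev)
        i = [sigmoid(a) for a in affine(*self.params["i"], z)]    # input gate: how much new content to write
        f = [sigmoid(a) for a in affine(*self.params["f"], z)]    # forget gate: near 0 resets the cell
        o = [sigmoid(a) for a in affine(*self.params["o"], z)]    # output gate: how much of the cell to expose
        g = [math.tanh(a) for a in affine(*self.params["g"], z)]  # candidate cell content
        c = [ft * ct + it * gt for ft, ct, it, gt in zip(f, c_prev, i, g)]
        h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]
        return h, c

    def run(self, xs):
        """Process a sequence from a zero initial state and return the final (h, c)."""
        h = [0.0] * self.n_hidden
        c = [0.0] * self.n_hidden
        for x in xs:
            h, c = self.step(x, h, c)
        return h, c


cell = LSTMCell(n_in=3, n_hidden=4)
h, c = cell.run([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
```

The cell state is updated additively (`f * c_prev + i * g`). When the forget gate stays close to 1, the error signal can flow back across many steps, which is how LSTM sidesteps the 5-to-10-step limit of plain RNNs.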

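RNNs and LSTMs have many NLP applications. Reference [35] applied gated recurrent networks to sentiment analysis and improved accuracy by about 5% over SVM and CNN methods on movie review datasets such as IMDB. Reference [36] combined a bidirectional LSTM with a convolutional neural network and a conditional random field for part-of-speech tagging and named entity recognition, achieving the best results at the time, 97.55% and 91.21%, respectively.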

-

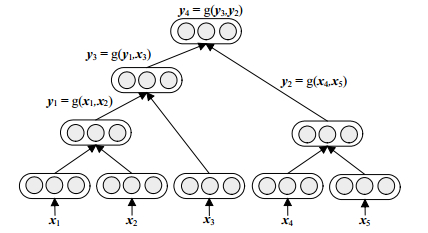

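A recursive neural network is a deep neural network constructed recursively over a tree structure and used to build the semantic representation of a sentence [37]. The tree is usually binary; a typical recursive neural network is shown in Figure 2. For syntactic parsing, x in Figure 2 denotes word vectors and y denotes the vectors of merged subtrees. Define global parameters W, b, and U, and a score s that measures how plausible a subtree is. For a merge node (x1, x2 → y1), we then have:

$$y_1 = f\left(W\,[x_1; x_2] + b\right), \qquad s_1 = U^{\top} y_1$$

where [x1; x2] is the concatenation of the two child vectors and f is an elementwise nonlinearity such as tanh.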

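The global parameters are initialized randomly, and parsing proceeds greedily: each pair of adjacent leaf nodes (or subtrees) is tentatively merged into a subtree and scored, the highest-scoring pair is actually merged, and this repeats until a complete parse tree is formed. Parsing is a supervised task, i.e. each training sentence has a correct parse tree, so the training objective is to optimize the network parameters to minimize a score-based loss, so that the correct tree scores higher than incorrect ones. Besides parsing, recursive neural networks can also be used for relation classification [38] and sentiment analysis [39].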
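The greedy merging procedure above can be sketched in a few lines of pure Python. The sketch assumes the standard composition y = tanh(W[x1; x2] + b) with plausibility score s = U·y; the toy word vectors and parameters are random, the helper names are hypothetical, and the max-margin training against the correct tree is omitted.

```python
import math
import random


def compose(W, b, U, left, right):
    """y = tanh(W [left; right] + b) and s = U . y: parent vector and its score."""
    x = left + right  # concatenate the two child vectors
    y = [math.tanh(bi + sum(w * xi for w, xi in zip(row, x))) for row, bi in zip(W, b)]
    s = sum(u * yi for u, yi in zip(U, y))
    return y, s


def greedy_parse(words, vectors, W, b, U):
    """Merge the highest-scoring adjacent pair until a single tree remains."""
    nodes = [(w, vectors[w]) for w in words]  # (subtree, vector) pairs
    total_score = 0.0
    while len(nodes) > 1:
        best = None
        for k in range(len(nodes) - 1):
            y, s = compose(W, b, U, nodes[k][1], nodes[k + 1][1])
            if best is None or s > best[0]:
                best = (s, k, y)
        s, k, y = best
        nodes[k:k + 2] = [((nodes[k][0], nodes[k + 1][0]), y)]  # replace the pair by its parent
        total_score += s
    return nodes[0][0], total_score


def leaves(tree):
    """Left-to-right leaf words of a nested-tuple tree."""
    return [tree] if isinstance(tree, str) else leaves(tree[0]) + leaves(tree[1])


rng = random.Random(0)
dim = 2
vectors = {w: [rng.uniform(-1, 1) for _ in range(dim)] for w in ["the", "cat", "sat"]}
W = [[rng.uniform(-1, 1) for _ in range(2 * dim)] for _ in range(dim)]
b = [0.0] * dim
U = [rng.uniform(-1, 1) for _ in range(dim)]
tree, score = greedy_parse(["the", "cat", "sat"], vectors, W, b, U)
```

The same W, b, and U are reused at every merge, so a single set of parameters scores subtrees of any size. The total score of the returned tree is what a max-margin objective would compare against the score of the correct tree.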

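The convolutional neural network (CNN) is a deep neural network proposed in [40] and improved in [41]. In an ordinary feed-forward network the input and hidden layers are fully connected, whereas in a CNN each node of a convolutional layer is connected only to a local region of fixed size; the weight matrix of these connections is called the convolution kernel and is shared across positions. Pooling is another key technique in CNNs: it replaces each fixed-size region with its average or maximum, which both reduces the number of features and makes the network more robust.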
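For text, convolution usually slides a kernel over windows of consecutive word vectors. The pure-Python sketch below shows a narrow 1-D convolution followed by max pooling. The ReLU activation and pooling over the whole sentence (max-over-time, a common choice for sentence classification) are assumptions made here; pooling over smaller fixed-size regions works the same way on sub-ranges. The function name and toy values are hypothetical, and the sentence is assumed to be at least `width` words long.

```python
def conv_max_pool(sentence, kernels, biases, width):
    """Narrow 1-D convolution over a sentence, then max pooling over all positions.

    sentence: list of word vectors (length n >= width, each of size dim)
    kernels:  list of filters, each a flat list of width * dim weights
    Returns one pooled feature per filter."""
    # each window is the concatenation of `width` consecutive word vectors
    windows = [sum(sentence[t:t + width], []) for t in range(len(sentence) - width + 1)]
    features = []
    for kernel, bias in zip(kernels, biases):
        # the same kernel weights are reused at every position (weight sharing)
        activations = [max(0.0, bias + sum(k * x for k, x in zip(kernel, win))) for win in windows]
        features.append(max(activations))  # keep only the strongest response
    return features


sentence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]        # three toy 2-d word vectors
kernels = [[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]]  # two filters of width 2
features = conv_max_pool(sentence, kernels, biases=[0.0, 0.0], width=2)
```

Here `features` is `[1.0, 2.0]`. Whatever the sentence length, each filter contributes exactly one feature, which is why pooling lets a CNN feed variable-length text into a fixed-size classifier.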

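There have been many new attempts to apply CNNs in NLP. Reference [6] applied CNNs to semantic role labeling, and [42] used characters as features and trained CNN models on large text corpora for ontology classification, sentiment analysis, and text classification; the CNN model it used is shown in Figure 3.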

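3.1. Deep Belief Networks and Stacked Autoencoders

3.2. Recurrent Neural Networks and Long Short-Term Memory

3.3. Recursive Neural Networks and Convolutional Neural Networks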

-

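Deep learning methods are widely used across NLP. In machine translation, [43] applied DNNs and word embeddings, reducing perplexity by 15%. Reference [44] used bilingual word vectors as features and improved the BLEU score by 0.48. Recurrent neural networks used for machine translation subproblems (such as word alignment [45] and word ordering [46]) are also widely used for text generation. Reference [28] trained a recurrent text generator on character sequences alone and obtained results close to those of a text generation system built with a large number of hand-written rules. For examples of generating text with recurrent networks, [47-48] provide many interesting cases. Recently, generating descriptive text for images has also attracted wide attention [49].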

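The SENNA system [11] provides a unified framework for part-of-speech tagging, chunking, semantic role labeling, and named entity recognition: a discriminative deep network model built on word-vector features. Building on this, [50] added character-level features to part-of-speech tagging, slightly improving accuracy (by 0.3%). Chunking is similar to part-of-speech tagging, and improvements in its accuracy have likewise plateaued [51]. For semantic role labeling, [52] proposed training word embeddings with semi-supervised learning, which effectively improved accuracy. Named entity recognition (identifying person names, place names, times, numbers, and so on) underpins many NLP applications and has attracted many researchers. Compared with traditional feature engineering methods [53], [54] used word and character features, combined LSTM with a conditional random field, and applied dropout, achieving better recognition rates.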

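For constituency parsing, [55] compared different recursive neural networks and found that even the best recursive-network parser was slightly inferior to Stanford University's parser (the Stanford Parser). Reference [38] therefore combined the principles of the Stanford Parser with recursive neural networks, further improving accuracy (0.855 to 0.904). For text classification, convolutional networks using character features and word embeddings [11], recursive network models based on tensor composition [39], and recurrent network models based on tree structures [56] are currently effective hybrid deep networks.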

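Question answering is an extremely difficult NLP task. Reference [57] described the general pipeline for solving question answering with neural networks. Reference [58] proposed memory networks, which take as input prior factual texts that have undergone semantic analysis and manual filtering, and learn the weights of a recurrent neural network with supervision. Recently, multimodal question answering about image content has also received wide attention [59].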

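In China, research applying deep learning to NLP is also receiving growing attention. Reference [60] applied adaptive recursive neural networks to sentiment analysis, [61] embedded sentiment information directly into word vectors for sentiment classification, and [62] applied DBNs to pronoun anaphora resolution. Chinese research teams have likewise turned to deep learning to tackle active NLP problems. Huawei Noah's Ark Lab applied CNNs to machine translation [63] and multimodal question answering [64]. Microsoft Research Asia has used various deep networks for question answering, machine translation, and chatbots [65-67]. The Natural Language Processing and Computational Social Science Lab at Tsinghua University has applied deep learning to machine translation, relation extraction, and distributed representations of knowledge [68-70], while the natural language processing group at Soochow University focuses on Chinese information extraction and multilingual sentiment analysis [71-72]. The natural language groups at Harbin Institute of Technology, the Chinese Academy of Sciences, Peking University, and other institutions have also repeatedly published high-quality papers at international conferences [73-75]; more and more Chinese researchers are making outstanding contributions to research that combines deep learning with NLP.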

-

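Although deep learning has achieved great success in many application areas, it is still a rapidly developing emerging technology with many open research problems. The biggest bottleneck is that, apart from the biological-inspiration argument, the theoretical foundations of deep learning are still in their infancy: most results are empirical, there is not enough theory to guide experiments, and researchers cannot tell whether a given network architecture and hyperparameter setting form an optimal combination. In addition, there is still no general-purpose deep network or learning strategy that works for most application tasks, so researchers keep trying new network architectures and learning strategies to improve generalization performance.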

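At present, applying deep learning to NLP mainly involves three steps: 1) take raw text as input and automatically learn distributed representations of text features; 2) feed these distributed vector features into a deep neural network; 3) depending on the application, choose a suitable deep learning model and train the network weights with supervision. The application prospects of deep learning combined with NLP are extremely broad. Building on research in syntactic analysis and information extraction, deep learning models have been flexibly applied to a range of natural language tasks such as multilingual machine translation, question answering, multimodal applications, and chatbots.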

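However, deep learning has not yet achieved in NLP the kind of dramatic success it has had in speech recognition and computer vision. We believe the difficulties and possible breakthroughs of deep learning in NLP come down to four aspects: 1) general semantic features that apply broadly across NLP tasks; 2) research on hyperparameter settings; 3) the proposal and study of new network architectures and learning strategies (such as attention models [76]); 4) logical reasoning based on natural language, and multimodal applications. Of these last two, natural-language reasoning would improve the "intelligence" of machines, while multimodal applications would extend the domains in which that "intelligence" is applied.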

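In summary, we believe deep learning has already produced many valuable results in NLP and will achieve even greater success in the near future. The road ahead nonetheless remains full of challenges that merit broad and in-depth study by more researchers.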
